The growth projection by 2020 is almost 40ZB (zettabyte, 1021 bytes), the majority generated by human beings, followed by physical devices connected to the Internet. Another indicator that allows us to verify this trend is that the Big Data analytics and technology market grows at an annual rate of 20-30%, with an estimated world market of 50 billion euros by 2018.

But it is not simply the amount of data that makes the concept of Big Data unique. We tend to take this concept literally and associate it with a large amount of information, but, as we will see later on, a set of data must have more qualities in order to be considered Big Data.

DEFINITION OF BIG DATA AND ASSOCIATED PROBLEMS

We can talk about Big Data when large amounts of information are generated (Volume) very quickly (Velocity), with heterogeneous types of data (Variety). Recently, the industry has started to add a fourth ‘V’ to these three classic features (the three V’s): Veracity. Given that a large portion of information is directly generated by people, it is necessary that the origin of the data be granted the quality of veracity. There is no point in having a full set of data that is not reliable.

To a great extent, the rise in Big Data technologies has been caused by the social networks, as far as the volume and variety of data are concerned, and by the marketing sector, with regard to the possibilities of demonstrating the value of all the information being generated. Banking is another classic sector that generates and exploits Big Data. The study of the information on uses and habits that can be obtained from banking information makes it possible to design products tailored to customers, or to predict behaviours, such as outstanding payments, according to the correlation of the information available. Engineering firms are also beginning to identify cases of use for which the capacity of Big Data analytics is a competitive advantage.

Finally, the field of the IoT (Internet of Things) and Smart Cities should be noted.

The concept of a Smart City involves an intensive use of information technologies for collecting and processing the information that the city generates using the sensors deployed or other data sources, such as traffic cameras or any other source of unstructured information.

The four qualities that information must have in order to identify with

the concept of big data are: volume, velocity, variety and veracity

THE INDUSTRY’S APPROACH

Big Data projects cannot be efficiently addressed using traditional technologies. The requirements for storing and exploiting such quantities of data, with their qualities of velocity and heterogeneity, have forced the industry to design new technologies that make it possible to work with information in real time, including the previously mentioned characteristics of data volume and variety.

Among the different paradigms presented by the industry when tackling Big Data projects, we can highlight In-Memory (IMDB) technologies and Distributed Systems. In-Memory technology allows all of the information that is necessary to work to be loaded into a memory where the processing is much faster. Furthermore, solutions based on distributed systems are oriented towards parallel processing, allowing a complex problem to be broken down and sorted out by using different machines responsible for solving each part of the original problem. This breakdown allows for the use of affordable computers which together make up a large processing platform. The appearance of Open Source solutions such as Hadoop and Storm has supported this trend.

Additionally, there is a tendency to implement Big Data platforms using cloud services. The problem raised in Big Data projects is infrastructure dimensioning and scalability (growth potential). For this reason, these sorts of projects need to have an infrastructure that is elastic and which allows available resources to be expanded or reduced depending on our requirements at any given moment.

Solutions based on cloud services are going to take the place of private infrastructure contracting (on-premise), as this allows companies to be free from infrastructure installation and maintenance, in order to focus on tasks which contribute value to the project. We are no longer talking about acquiring machines (virtual or physical) where we have installed and configured our own solution, but rather about utilising the services we need at any given time, paying only for the processing time and the storage. For instance, if we need an automatic learning service where we can define a prediction algorithm that works with our own information, contracting the cloud service and only paying for the period of use is sufficient.

WHAT BIG DATA IS HIDING

Once we have this vast amount of data, how do we generate value from our information? There is a misconception that Big Data projects involve storing the existing information and applying a relatively complex technology to analyse what we can obtain. A Big Data project should begin prior to starting to compile information. It is necessary to be sure about the objectives that motivate the project and the type of information we need, as well as to consider all of the constraints involved in the collection and processing of this information.

As opposed to Big Data technology, classic Business Intelligence systems are based on the consolidation of the information which lets us carry out operations with that pre-calculated data. The new Big Data paradigm forces us, on one hand, to be able to analyse the flow of information in real time, and, on the other, to store the raw information. With regard to temperature sensors, for example, we need to record all measurements that the sensor has generated. It is not enough to simply control the average daily temperature, since having the additional information does not allow us to analyse details to be able to predict parameter behaviour or identify behaviour patterns. That is to say that we need to be able to store and analyse the information in its original form, or at a much lower level of detail than in traditional analytical systems.

BIG DATA IN ENGINEERING

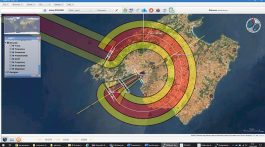

The areas of application are far-reaching, ranging from solutions for Smart Cities to automatic learning techniques for predictive maintenance activities. At Ineco we are aware of the importance and the possibilities Big Data technologies have in the field of engineering. Therefore, the Information Technologies division studies and exploits the characteristics of Big Data in different areas. In terms of Smart Cities we work in different fields, among which we can highlight the Smart CityNECO platform, for the integration of information from the various city services (mobility, environment, etc.) allowing for a correct management based on the control panels of the different services provided by the city. In addition, also within the field of Smart Cities, but more specifically concerning the axis of mobility (Smart Mobility), Ineco works in the study and optimisation of mobility in cities by creating prediction and simulation environments in real time that allow the optimal mobility regulation parameters in the different areas of the city to be determined. This solution is based on integrating the simulation models, as well as on the automatic learning techniques, by working with the information concerning the city’s state of mobility in real time.

A big data project must be sure about the objectives and the type of information we need, as well as consider the constraints involved in the collection and processing of this information

Within the field of infrastructure maintenance, predictive maintenance is based on anticipating the problem before it becomes a reality, or before its state loses the optimal conditions. This way, we lengthen the time between maintenance activities, thus improving availability while saving on costs. In this field, we develop predictive techniques using measurements from different parameters thanks to sensors which allow a relationship with their service life to be established. The difference with traditional techniques lies in automatically combining all information regarding their state, characteristics, exploitation and environmental conditions.

Within the area of mobility surveys and capacity, Ineco works on a mobile device survey platform that allows all information relevant to these types of studies to be compiled, including the responses provided by the user, location information provided by the GPS, etc. Additionally, with regard to the answers given using natural speech, we can conduct what is called a ‘Sentiment Analysis’ (opinion mining) which lets us identify the speaker’s attitude towards an issue.

Furthermore, we cannot forget that Big Data does not only consider alphanumeric information. Thus, another area of research focuses on image processing. The objective is to locate defects or objects in an automated way.

To sum up, we are undergoing a digital transformation which, combined with interconnection capacities, is exponentially increasing the amount of information generated. We live in the ‘Time of Data’ and the capacity to analyse that information is going to mark the difference in all fields of business.